
SOUND DESIGN IN HORROR

Why do horror games make us anxious before we even see the threat? The answer is sound. This project argues that audio isn’t just a supporting layer. It’s the core of how fear works in horror games. Players react to what they hear before they understand what’s coming. A breath. A crack. A low rumble. Those sounds hit the body first. They create fear before the brain has a chance to think.

This project looks at The Last of Us Part II. It’s a great example of how horror games use sound to shape emotion and control tension.

Ambient noise. Silence. Distorted effects. Sounds that react to the player’s choices. The game uses all of these to make you feel something before you even know why.

That’s what makes it work. Not just realism, but the way the sound pushes the story and the gameplay forward.

Horror audio has changed a lot over time. Older games were loud and obvious: jump scares and static. Now things are more layered. AAA games use spatial audio, environmental sound, and dynamic shifts. Many indie games take the opposite route, leaning on silence or strange glitches. Both styles mess with you. Both create fear.

This game lands somewhere in the middle. It uses both silence and dense layering, letting audio build fear in subtle ways.

Sound in The Last of Us Part II does more than add mood. It drives survival. Clickers respond to noise. Breath sounds tell you when a character is panicking. Silence warns you that something is wrong. Sound isn’t just part of the environment. It is the environment!

Studies back this up. According to the Audio Uncanny Valley article, distorted or unclear sound is often scarier than clean audio because the brain struggles to process it. A study by Toprac and Abdel-Meguid found that players react physically to sudden changes in volume and pitch. That’s what makes horror sound powerful. It works in the body, not just in the mind.

This project uses three methods to break that down.

Waveform analysis shows how sound gets louder or quieter to build suspense.

Transcription analysis looks at how whispered or emotional voices confuse automatic tools.

Spectrograms show what frequencies are being used during tense scenes.

All of this shows how horror audio works: not just as atmosphere, but as the thing that actually builds fear.

This topic is also personal. I always noticed I was getting scared before anything happened in the game. No jump scare yet. No monster. Just this feeling. Later I realized it was the sound doing that! That stuck with me, and it’s why I chose to dig into it. Sound doesn’t just support horror. It creates it.

WAVEFORM ANALYSIS

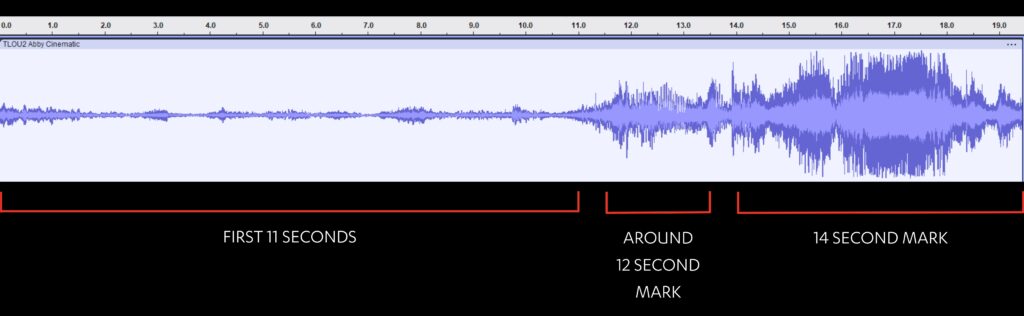

One of the clearest ways to show how sound builds fear is by looking at the waveform. In this 19-second clip from The Last of Us Part II, Abby enters an ambulance while searching for a med kit for Yara.

Right at the start, within the first second, there’s a sharp, tense audio cue. It’s short, but it instantly signals that something is about to go wrong. According to Toprac, horror games use subtle or minimal sounds to create psychological discomfort before the visual threat even appears. This early cue sets that up perfectly.

From 0 to 11 seconds, the waveform shows low amplitude, but you can still hear things happening. There are subtle croaking and clicking sounds from the infected off-screen. You also hear Abby’s breathing and movement. Each time the croaks intensify or shift closer, the waveform spikes a little more. That slow rise builds tension not through music but through ambient noise. This matches what Richter says about off-screen sound being used to “create threat from absence,” where the lack of a visible enemy makes things even more stressful.

At around 12 seconds, the shift becomes clear. The waveform rises fast. By 14 seconds, it’s full of jagged, chaotic spikes. That’s when the Rat King reveals itself and charges at Abby.

You haven’t seen it before this, but the sound has already told you something horrible is about to happen. Now it fully erupts.

WIRED explains that distorted or sudden audio actually triggers a physical fight or flight response before the brain fully understands the sound.

That’s why this moment feels so intense even though we barely see anything yet.
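The rise described above, from a low creep to a jagged burst at around 12 seconds, can be measured rather than eyeballed. The sketch below is a minimal illustration, assuming the clip has already been decoded to mono floating-point samples between -1.0 and 1.0 (for example, exported from Audacity as WAV and read with another tool). It splits the signal into half-second windows, takes the root-mean-square (RMS) level of each, and reports the first window that is four times louder than the opening two seconds. The window length, the 4× ratio, and the function names are hypothetical choices, and the test signal is synthetic, not the actual game audio.

```python
import math

def rms_envelope(samples, sample_rate, window_s=0.5):
    """Return the RMS level of each consecutive window of a mono signal."""
    size = max(1, int(sample_rate * window_s))
    envelope = []
    for start in range(0, len(samples), size):
        chunk = samples[start:start + size]
        envelope.append(math.sqrt(sum(x * x for x in chunk) / len(chunk)))
    return envelope

def first_surge(envelope, window_s, baseline_windows=4, ratio=4.0):
    """Time (s) of the first window louder than `ratio` x the opening baseline, else None."""
    baseline = sum(envelope[:baseline_windows]) / baseline_windows
    for i, level in enumerate(envelope):
        if level > ratio * baseline:
            return i * window_s
    return None

# Synthetic 19-second stand-in: a faint 50 Hz tone that creeps up for 12 s, then a burst.
rate = 1000  # deliberately low sample rate so the pure-Python demo runs quickly
signal = []
for n in range(19 * rate):
    t = n / rate
    amp = 0.02 + 0.04 * t / 12 if t < 12 else 0.8
    signal.append(amp * math.sin(2 * math.pi * 50 * t))

env = rms_envelope(signal, rate)
print(first_surge(env, 0.5))  # → 12.0
```

Because the baseline comes from the opening seconds, the slow creep never crosses the threshold; only the burst does. On the real clip, the reported time would depend on the window length and ratio chosen.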

This contrast between the quiet, eerie buildup and the explosive charge shows how horror audio works. It doesn’t just support the visuals. It sets the emotional pace. The waveform reveals that structure. It follows something like the attack stage of an ADSR (attack, decay, sustain, release) envelope, where tension builds slowly until it peaks hard. Even without dialogue or music, fear is created through ambient noise, breath, and silence. Nightmares and Soundscapes describes how horror games use acoustic ecology to make sound feel like part of the environment itself. That’s what this clip does. You feel the Rat King before you see it. The waveform makes that fear visible.
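To make the ADSR comparison concrete, here is a minimal sketch of an ADSR envelope as a function of time. A basic synthesizer envelope often uses a straight-line attack. To mirror the “slow build, hard peak” shape described above, this version raises the attack to a power (`curve` greater than 1), which is an assumption rather than anything measured from the game. The parameter values are hypothetical, loosely lined up with the 19-second clip so the peak lands at 14 seconds, when the Rat King charges.

```python
def held_level(t, attack, decay, sustain, curve=1.0):
    """Envelope level while the sound is held: attack -> decay -> sustain."""
    if t < attack:
        return (t / attack) ** curve  # curve > 1: slow start, steep finish
    if t < attack + decay:
        return 1.0 - (1.0 - sustain) * (t - attack) / decay  # fall from 1.0 to sustain
    return sustain

def adsr(t, attack, decay, sustain, release, note_off, curve=1.0):
    """Gain (0.0 to 1.0) of an ADSR envelope at time t, in seconds."""
    if t < 0:
        return 0.0
    if t < note_off:
        return held_level(t, attack, decay, sustain, curve)
    if release <= 0 or t >= note_off + release:
        return 0.0
    # Release: fade linearly from wherever the envelope was at note_off down to 0.
    start = held_level(note_off, attack, decay, sustain, curve)
    return start * (1.0 - (t - note_off) / release)

# Hypothetical values: 14 s curved attack, 1 s decay to 80%, release starting at 17 s.
clip = dict(attack=14.0, decay=1.0, sustain=0.8, release=2.0, note_off=17.0, curve=3.0)
for second in (0, 7, 12, 14, 16, 18):
    print(second, round(adsr(second, **clip), 3))
```

Halfway through the attack (7 seconds), the gain is only 0.125. By 12 seconds it is about 0.63. Most of the rise is packed into the last few seconds before the peak, which is the shape the waveform shows.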

TRANSCRIPTION ANALYSIS

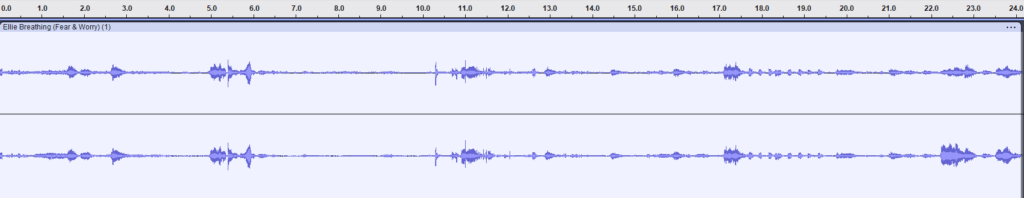

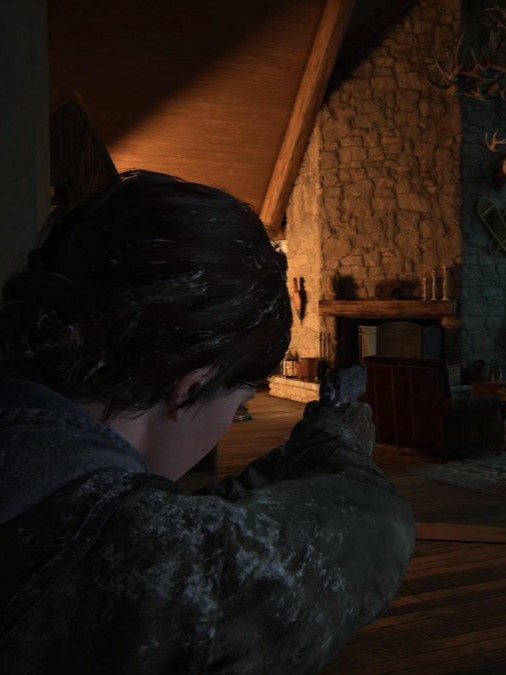

In this 24-second clip from The Last of Us Part II, Ellie enters the ski lodge where Joel is being held by Abby and her group.

She walks cautiously through the second floor, breathing shakily as distant yells and thuds echo in the background. At 4 seconds in, she whispers to herself, “Where is that coming from?” Her voice is low, unsteady, and almost blends into the ambient sound. Then at 16 seconds, as she quickly stomps down the stairs, she calls out, “Joel,” rushed, breathy, and full of fear. Her footsteps grow louder and faster as she nears the door where Joel is being held.

Otter.ai’s automatic transcript doesn’t catch much of this. It reads:

Unknown Speaker 0:00

Where

Unknown Speaker 0:05

is that coming from?

Unknown Speaker 0:17

Joel Oh,

Transcribed by https://otter.ai

The “Joel Oh” line is a clear mistake. She says only “Joel,” but the tool adds “Oh,” apparently trying to fill a gap. Her emotional tone is stripped out entirely. The breathing, the urgency, the heavy footsteps: none of that is captured. Even though the actual words are short, the way she says them is where the emotion lives, and that’s exactly what gets lost in transcription.
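One standard way to put a number on this failure is word error rate (WER), the usual accuracy metric for speech recognition. It counts the minimum number of substituted, deleted, and inserted words needed to turn the machine transcript into a reference transcript, then divides by the number of reference words. The minimal sketch below assumes the reference is what Ellie says according to the description above (“Where is that coming from?” and “Joel”). It joins Otter.ai’s segments into one string and ignores case and punctuation. The function names are illustrative, not part of any transcription tool.

```python
def normalize(text):
    """Lowercase and strip punctuation so 'from?' and 'from' count as the same word."""
    return " ".join("".join(c for c in w.lower() if c.isalnum()) for w in text.split())

def word_errors(reference, hypothesis):
    """Word-level Levenshtein distance: substitutions + deletions + insertions."""
    ref, hyp = reference.split(), hypothesis.split()
    # dist[i][j] = edits to turn the first i reference words into the first j hypothesis words
    dist = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dist[i][0] = i
    for j in range(len(hyp) + 1):
        dist[0][j] = j
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dist[i][j] = min(dist[i - 1][j] + 1,         # deletion
                             dist[i][j - 1] + 1,         # insertion
                             dist[i - 1][j - 1] + cost)  # substitution or match
    return dist[len(ref)][len(hyp)]

reference = "Where is that coming from? Joel"    # what Ellie says
hypothesis = "Where is that coming from? Joel Oh,"  # Otter.ai segments, joined
errors = word_errors(normalize(reference), normalize(hypothesis))
wer = errors / len(normalize(reference).split())
print(f"WER = {wer:.3f}")  # → WER = 0.167
```

By this measure the transcript looks nearly right: one inserted word out of six. That is the point. WER only counts words, so the shaky breathing, the urgency, and the footsteps that carry Ellie’s fear never enter the calculation at all.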

This failure supports what Donnelly and Garner explain about sound in horror games. They argue that fear in games often comes from acoustic ecology, a design method where sounds feel embedded in the environment, unclear and unsettling.

That’s exactly what’s happening in this clip. Ellie’s fear is built from unstable breathing, environmental yells, and unclear footsteps, not clean dialogue. The machine doesn’t recognize it because it’s not built to read fear the way a human ear does.

Toprac and Abdel-Meguid also emphasize that whispering and unclear audio are used to create anticipatory fear. In their analysis of horror sound design, they show that distorted or subtle audio is meant to confuse both the player and the character.

Ellie’s whisper “Where is that coming from?” is not just a line of dialogue. It’s a moment of shared panic between character and player. That feeling is completely missed when transcribed literally.

Transcription tools like Otter.ai are great with podcasts or interviews. Not horror. These tools assume clean, foregrounded speech, not whispered, breathy fear. When Ellie’s footsteps speed up and her voice shakes, that’s part of the horror. But software can’t feel tension. It can’t register panic. It only hears part of the message.

That’s the bigger point. Horror sound design isn’t built for transcription. It’s built to be felt. The fact that this kind of audio doesn’t transcribe well isn’t a flaw. It proves how powerful the sound is. The emotion lives between the words. Audio isn’t background. It’s the tool of survival, tension, and storytelling.

METADATA ANALYSIS

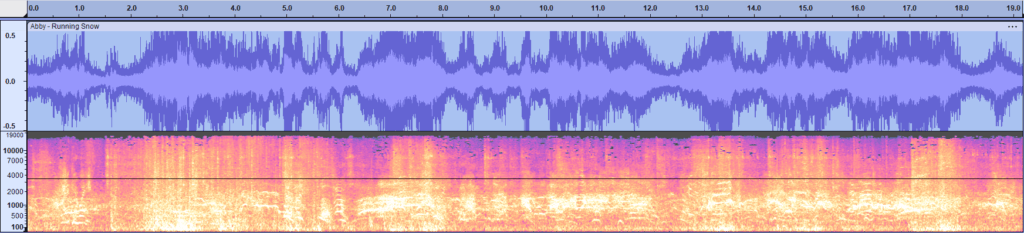

This 19-second sequence from The Last of Us Part II captures Abby’s escape from a horde of infected.

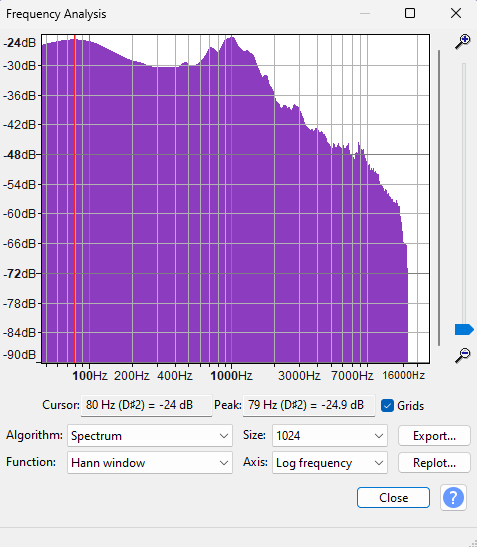

The sound is chaotic, urgent, and dense. Using Audacity’s spectrum analysis, we can see exactly how this tension plays out in the audio data. The waveform shows almost constant high amplitude with quick shifts and no room to breathe.

The spectrogram reveals thick textures. Sound is stacked across frequencies, with intense energy in the low to midrange (under 2000 Hz). That’s where footsteps, banging metal, screams, and Abby’s breath live. It’s all packed tight together.

In the frequency analysis, there’s a strong peak around 79 to 80 Hz at about –24 dB. Low-end energy like this often corresponds to heavy movement or impact.
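A dB reading like this comes from frequency analysis, and the core idea fits in a few lines. This is a minimal, unoptimized discrete Fourier transform (DFT). It assumes mono samples between -1.0 and 1.0 and scales each bin so that a full-scale sine reads 0 dB. It is not Audacity’s algorithm. Audacity’s Plot Spectrum applies a window function and averages across the selection, so its dB values are not directly comparable. The test signal is synthetic: an 80 Hz tone placed at -24 dB plus a quieter 600 Hz tone, chosen only to echo the reading described above.

```python
import cmath
import math

def spectrum_db(samples, sample_rate):
    """Naive DFT of a real mono signal.

    Returns (frequency_hz, level_db) for bins 0..N/2, scaled so a sine of
    amplitude 1.0 reads 0 dB at its bin. (DC and Nyquist bins read 6 dB high
    with this scaling; they are not used here.)
    """
    n = len(samples)
    result = []
    for k in range(n // 2 + 1):
        x = sum(samples[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        amplitude = 2 * abs(x) / n
        level = 20 * math.log10(amplitude) if amplitude > 1e-12 else float("-inf")
        result.append((k * sample_rate / n, level))
    return result

rate, n = 8000, 400                 # 400 samples -> 20 Hz per bin
low_amp = 10 ** (-24 / 20)          # -24 dB relative to full scale, about 0.063
signal = [low_amp * math.sin(2 * math.pi * 80 * t / rate)
          + 0.01 * math.sin(2 * math.pi * 600 * t / rate) for t in range(n)]

peak_hz, peak_db = max(spectrum_db(signal, rate), key=lambda bin_: bin_[1])
print(peak_hz, round(peak_db, 1))  # → 80.0 -24.0
```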

Across the 100 to 2000 Hz range, the spectrum stays active, showing how layered the environment is. Wind, groans, rattling fences, all crowding the player’s hearing.

Above 4000 Hz, the levels drop off hard, which fits how horror sound design often avoids crisp, clear sound in this range. It’s muddy and overwhelming on purpose. That lack of sonic clarity is a tactic. You can’t “hear your way out.” It traps you in the moment.
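The claim that energy sits mostly below 2000 Hz and falls away above 4000 Hz can be expressed as each band’s share of the total energy. This minimal sketch uses a naive DFT with band edges taken from the figures above. The test signal is synthetic (strong 80 Hz and 1000 Hz components and a faint 6000 Hz one), not the game audio, and the function name is hypothetical.

```python
import cmath
import math

def band_energy_shares(samples, sample_rate, edges=(2000.0, 4000.0)):
    """Fraction of spectral energy below edges[0], between the edges, and at or above edges[1]."""
    n = len(samples)
    bands = [0.0, 0.0, 0.0]
    for k in range(1, n // 2 + 1):  # skip the DC bin, go up to Nyquist
        freq = k * sample_rate / n
        x = sum(samples[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        power = abs(x) ** 2
        if freq < edges[0]:
            bands[0] += power
        elif freq < edges[1]:
            bands[1] += power
        else:
            bands[2] += power
    total = sum(bands)
    return [b / total for b in bands] if total else bands

rate, n = 16000, 400  # 40 Hz per bin, content up to 8000 Hz
signal = [math.sin(2 * math.pi * 80 * t / rate)
          + 0.5 * math.sin(2 * math.pi * 1000 * t / rate)
          + 0.05 * math.sin(2 * math.pi * 6000 * t / rate) for t in range(n)]

low, mid, high = band_energy_shares(signal, rate)
print(f"below 2 kHz: {low:.1%}, 2-4 kHz: {mid:.1%}, above 4 kHz: {high:.1%}")
```

With real game audio, the split would depend on the band edges and the analysis settings. The point is that “muddy” has a measurable signature: nearly all of the energy sits low.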

This moment matters because the sound metadata shows that horror isn’t about melody or even traditional music. It’s about pressure. The game forces the player to react through sound. You don’t just hear Abby say “shit” or yell “ahh.” You feel boxed in by the low-end chaos and distorted noise. Sound is driving the action, not just reacting to it. Even without visuals, the data reveals that something terrible is happening.

What’s powerful here is how the frequency information supports the scene’s emotion. High-density, low-frequency audio puts stress on the player. This backs up what researchers like Toprac argue: horror audio activates the body first, not just the brain. And as Nightmares and Soundscapes explains, audio in horror games now carries texture and weight meant to physically disorient the player. The metadata isn’t just numbers. It’s evidence that fear is being engineered by sound.

TIMELINE

To understand how horror game sound got to the level we hear in The Last of Us Part II, we need to look at how it started and changed over time.

The timeline shows important moments in horror games that helped shape how sound is used to create fear and pull players into the game. Each entry highlights major innovations that pushed horror audio toward more cinematic, emotionally charged design, something that The Last of Us Part II (TLOU2) uses with precision.

For example, Resident Evil (1996) is often seen as a turning point.

As Leamcharaskul notes, its “sparse music, sudden audio cues, and ambient unease” created a soundscape that amplified isolation and dread.

This early reliance on atmosphere over dialogue helped establish a baseline for emotional horror sound design.

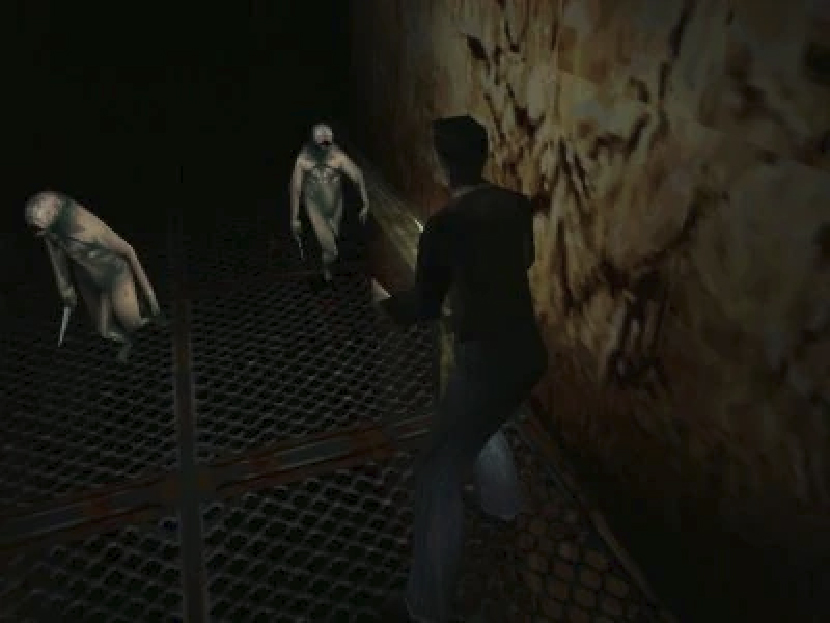

When Silent Hill (1999) introduced layered industrial noise and psychological audio design, it went even further. The use of low frequency tones and distorted ambient loops demonstrated how horror sound could reflect a character’s mental state. Toprac emphasizes how games like this began shifting away from musical cues and leaned more on diegetic, in world sounds to generate tension.

As horror games matured, titles like Dead Space (2008) embraced full soundscape immersion. The game’s dynamic audio system adapted to the player’s actions, using proximity-based monster sounds and environmental cues to trick the player’s perception of threat.

This influenced a generation of horror designers to embrace player-triggered audio feedback, something that can clearly be seen in TLOU2’s dynamic enemy proximity noises and breathing patterns.
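Proximity audio of this kind generally rests on a distance attenuation curve: the farther away a source is, the quieter it plays. The curves actually used in Dead Space and TLOU2 are not described here, so this minimal sketch uses a stand-in, the inverse-distance-clamped model defined in the OpenAL 1.1 specification. The parameter values and the distances in the example are hypothetical.

```python
import math

def inverse_distance_clamped(distance, ref_distance=1.0, max_distance=30.0, rolloff=1.0):
    """Gain for a source at `distance` (OpenAL-style inverse distance, clamped).

    Distances are clamped to [ref_distance, max_distance], so a source closer than
    ref_distance plays at full gain and one beyond max_distance gets no quieter.
    """
    d = min(max(distance, ref_distance), max_distance)
    return ref_distance / (ref_distance + rolloff * (d - ref_distance))

# Hypothetical distances (in metres) of an infected creeping toward the player.
for metres in (16, 8, 4, 2, 1):
    gain = inverse_distance_clamped(metres)
    print(f"{metres:>2} m: gain {gain:.3f} ({20 * math.log10(gain):+.1f} dB)")
```

With these settings, each halving of the distance doubles the gain (roughly +6 dB), so an approaching enemy gets steadily louder in a way the player can read without seeing it.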

The Last of Us (2013) marked a shift into narrative driven survival horror with highly detailed ambient audio.

A WIRED article explains how the game emphasized subtle environmental layers like the creak of old buildings or faint distant screams, which elevated the tension without needing music.

That technique becomes even more refined in Part II, where silence, breath, and proximity audio are carefully balanced to manipulate player emotion.

Finally, in The Last of Us Part II (2020), all of these historical techniques converge. You hear Abby’s panicked breathing, the specific placement of infected snarls through 3D audio, and environmental interference like wind and rain, all working together to craft deeply immersive moments.

As Cutting notes in her interview about The Quarry, cinematic horror game audio “needs to feel real but hyper detailed,” which applies just as much to TLOU2, even though TLOU2 predates The Quarry.

The historical progression shown in this timeline helps illustrate how we got from static audio loops to dynamic, emotionally manipulative soundscapes. The tools and techniques used in older games laid the groundwork for how The Last of Us Part II achieves its visceral horror.

OUTRO

All of this points to one thing. Horror games do not just scare us with what we see. They scare us with what we hear before we even know why. In The Last of Us Part II, sound is not just background. It is how fear is delivered. The waveform shows tension rising before a threat appears. The failed transcription proves that emotion in horror lives between the words. The spectrogram reveals that fear is not clean. It is layered, muddy, overwhelming. And the timeline shows this did not happen by accident. It is part of a long history of horror sound evolving toward emotional precision.

Sound creates fear in the body before the brain can catch up. That is what makes horror games different from movies. You are not just watching a scare. You are inside it, trying to survive. That is why this matters. Because horror audio does not just tell the story. It is the story. And in games like TLOU2, every breath, every silence, every distant groan is another line in the script. One you feel before you even hear it.

WHY SOUND IS THE REAL SOURCE OF TERROR IN HORROR GAMES